Are AI Agents Safe in the Workplace? AI-Ready Data Risks You Need to Know

Hello. We are CUBIG, dedicated to helping enterprises make their data practically usable for AI.

The trend in AI technology has clearly shifted over the past few years. While AI used to be closer to a tool for generating text or summarizing information, it is now rapidly expanding into AI Agents that execute actual tasks.

More and more often, AI automates workflows by controlling browsers, collects and organizes information through messenger integrations, and carries out specific business processes. This shift opens new possibilities for enterprises, because AI has moved beyond a simple assistive tool and into the actual execution environment.

At the same time, a previously overlooked issue has resurfaced: the data environment.

Actual Security Vulnerabilities in AI Agents

The security industry is pointing out similar issues. According to Kaspersky, multiple critical vulnerabilities were identified in the open-source AI agent OpenClaw.

These vulnerabilities could allow attackers to execute malicious code, steal authentication tokens, and gain control over the local environment.

In particular, the report highlights that simply interacting with a compromised input or environment could lead to unauthorized system access.

Because AI agents are directly connected to services such as email, file systems, and calendars, a single exploit can escalate quickly — potentially leading to large-scale data exposure.

📃Read the full case : Kaspersky — OpenClaw Vulnerabilities Analysis

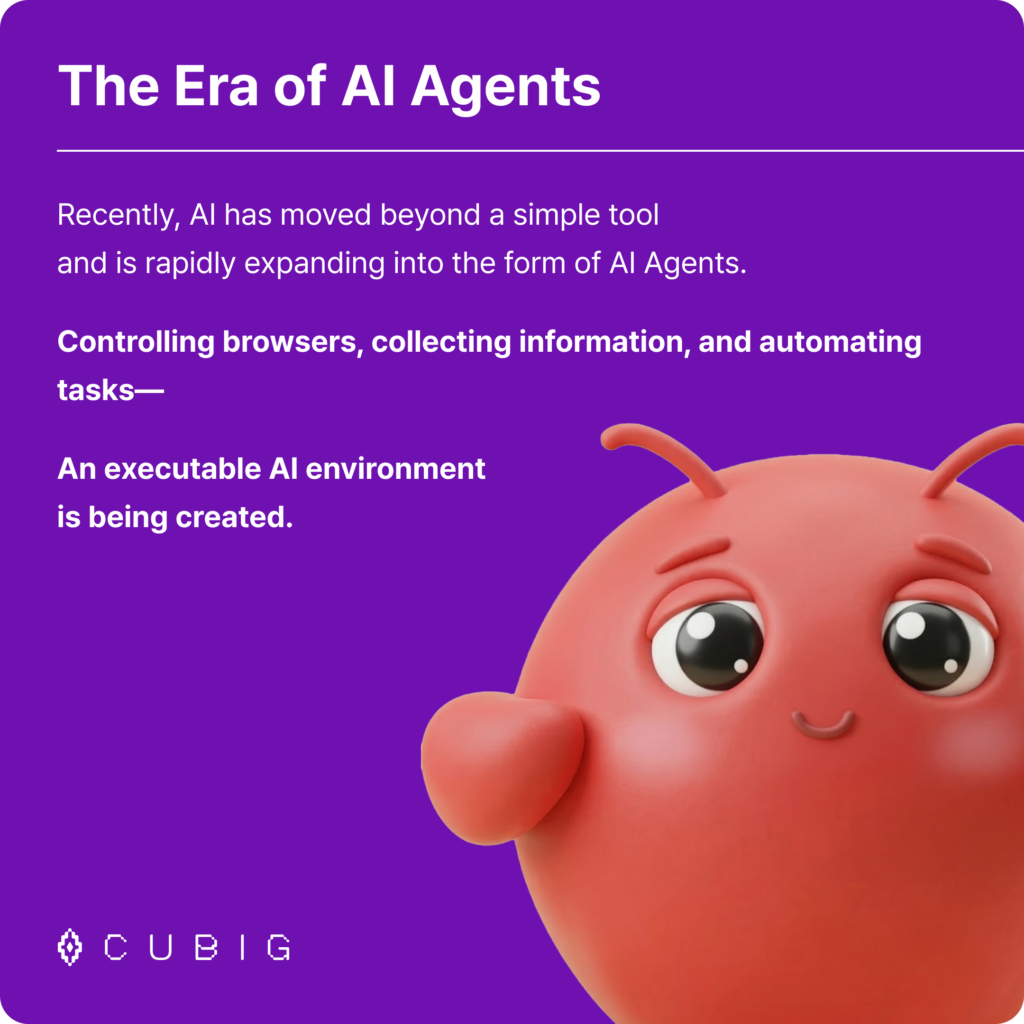

In a case recently reported overseas, an enterprise’s internal AI system, which was connected to an AI agent, was breached in just two hours. The attackers gained access by exploiting traditional security flaws, such as unauthenticated APIs and SQL injection vulnerabilities.

During this process, it was confirmed that attackers could potentially access:

- 46 million chat logs

- Hundreds of thousands of internal files

- Data structures used by the AI

📃 Read the full case : McKinsey AI platform breach analysis
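The flaws named in this case are traditional ones, and so are their defenses. As a minimal sketch of the standard fix for SQL injection, the example below uses a parameterized query with Python's built-in `sqlite3` module. The `chat_logs` table, its columns, and the `find_chat_logs` function are hypothetical names chosen for illustration. They do not describe the breached system.

```python
import sqlite3


def find_chat_logs(conn: sqlite3.Connection, user_id: str) -> list[tuple]:
    # The ? placeholder makes the driver treat user_id strictly as data,
    # so crafted input cannot change the structure of the query.
    cur = conn.execute(
        "SELECT id, message FROM chat_logs WHERE user_id = ?", (user_id,)
    )
    return cur.fetchall()


# Hypothetical in-memory table, used only for this demonstration.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE chat_logs (id INTEGER PRIMARY KEY, user_id TEXT, message TEXT)"
)
conn.executemany(
    "INSERT INTO chat_logs (user_id, message) VALUES (?, ?)",
    [("alice", "hello"), ("bob", "quarterly numbers")],
)

print(find_chat_logs(conn, "alice"))         # rows for alice only
print(find_chat_logs(conn, "x' OR '1'='1"))  # injection attempt matches nothing
```

If the same query were built by pasting `user_id` into the SQL string, the second call would return every row in the table. With the placeholder, the whole input is compared as a literal user ID.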

Why Connecting AI Agents Directly Is Risky

The most defining feature of an AI agent is that it doesn’t just generate answers—it actually uses the system. It opens browsers to search for information, reads and organizes internal documents, modifies and transmits files, and automatically executes tasks by integrating with external services.

In other words, the AI operates just like a human user within the business system. Because of this structure, directly connecting AI agents to business systems can cause the following problems:

- Exposure of Sensitive Information: Personal data or internal confidential information might be included in the prompts or documents delivered to the AI.

- Data Policy Management Issues: Every enterprise has different data usage policies and regulatory standards, but AI agents do not inherently understand these rules.

- Difficulty Controlling Data Usage: It can be incredibly difficult to track exactly what data was sent to the AI and what information was processed.

As a result, many companies experience this common hurdle during AI adoption: The AI technology is ready, but the data is not ready to be directly connected.
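To make the tracking problem concrete, here is a minimal sketch of an audit record written every time data leaves for an AI system. It assumes a simple in-memory list as the log, and the `record_outbound` function and its field names are hypothetical. A real deployment would write to durable, access-controlled storage.

```python
import hashlib
from datetime import datetime, timezone

# Hypothetical in-memory audit log; stands in for durable storage.
audit_log: list[dict] = []


def record_outbound(agent: str, destination: str, payload: str) -> dict:
    """Record what was sent to an AI system without storing the content itself."""
    entry = {
        "time": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "destination": destination,
        # A hash lets auditors match a payload later without keeping a copy.
        "sha256": hashlib.sha256(payload.encode("utf-8")).hexdigest(),
        "chars": len(payload),
    }
    audit_log.append(entry)
    return entry


entry = record_outbound("report-agent", "external-llm", "Q3 revenue draft")
print(entry["agent"], entry["destination"], entry["chars"])
```

Storing a hash and a length instead of the raw text is one possible design choice: it answers "what was sent, when, and by which agent" without turning the audit log into a second copy of the sensitive data.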

What Is an AI-Ready Data Environment?

To utilize AI in an enterprise environment, the data environment must be prepared before the AI model itself. Particularly when using AI with system access privileges, like AI agents, the data must be connected to the AI in an AI-Ready state.

An AI-Ready data environment doesn’t just mean the data exists. It means:

- Sensitive information is strictly managed.

- Data usage policies are applied.

- The data delivered to the AI is controlled.

In short, a management layer is required between the AI and your data.

How to Use AI Agents Safely: CUBIG’s LLM Capsule

CUBIG’s LLM Capsule provides a data layer that detects and processes information according to your policies before enterprise data is delivered to the AI system.

Here is how the LLM Capsule works:

- It automatically detects sensitive information within the input data.

- It de-identifies or masks the data according to enterprise policies.

- If necessary, it applies data filtering policies to meet industry regulatory standards.

Through this process, enterprises can manage their data to ensure it is delivered to the AI system in an AI-Ready state without having to completely block data flow. This approach isn’t about preventing the use of AI; it’s about creating a data environment where AI can be used safely.
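The detect-then-mask idea can be illustrated with a short sketch. This is not LLM Capsule's implementation or API. It is a simplified stand-in that assumes sensitive data can be found with regular expressions, and the `POLICY` table and `mask` function are hypothetical names. Production detection typically needs far more than two patterns.

```python
import re

# Hypothetical policy: label -> pattern for one kind of sensitive data.
POLICY = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "PHONE": re.compile(r"\b\d{2,3}-\d{3,4}-\d{4}\b"),
}


def mask(text: str, policy: dict = POLICY) -> tuple[str, dict[str, int]]:
    """Replace each detected item with its label and count the detections."""
    counts: dict[str, int] = {}
    for label, pattern in policy.items():
        # subn returns the rewritten text and the number of replacements.
        text, n = pattern.subn(f"[{label}]", text)
        counts[label] = n
    return text, counts


masked, found = mask(
    "Contact jane.doe@example.com or 010-1234-5678 about the contract."
)
print(masked)  # Contact [EMAIL] or [PHONE] about the contract.
print(found)   # {'EMAIL': 1, 'PHONE': 1}
```

Only the masked text would be passed on to the AI system, so the request still goes through while the sensitive values stay behind. The counts give the enterprise a simple record of what the policy caught.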

The Crucial Question in the Era of AI Agents

Many enterprises are already experimenting with AI agents in various forms. AI agents are spreading quickly across areas like workflow automation, data analysis, and customer service.

The question enterprises need to ask themselves now is not simply whether to use AI agents. The more important question is: In what data environment will you use AI?

The Enterprise AI Environment Starts with Data Preparation

To leverage AI agents in an enterprise environment, data shouldn’t just be stored; it must be managed in a state that the AI can actually use. Particularly in architectures where AI systems connect directly to enterprise data, protecting sensitive information and applying data policies must be addressed together.

LLM Capsule provides the essential data layer that supports data policy management and sensitive information protection as enterprise data connects to AI. With this, enterprises can manage their data to seamlessly connect with AI systems in an AI-Ready state, without completely cutting off data access.

Assess your enterprise data environment today and start designing an AI-Ready data structure.

FAQ

Is it safe to deploy AI agents in enterprise environments?

Not without a controlled data environment. AI agents can access sensitive systems and data.

What is an AI-ready data environment?

It is a data layer that controls, filters, and manages data before AI systems use it.

#AIAgents #DataSecurity #EnterpriseAI #CUBIG #AIReady #LLMCapsule #AIgateway #DataManagement #AIReadydata #datagovernance
