Why Data Trust Matters More Than Data Quality in Enterprise AI

Table of Contents

Hello, this is CUBIG — a company helping enterprise data become usable for AI in real-world environments.

When we speak with organizations running AI projects, we often hear a similar question:

“If data quality improves, won’t AI naturally work better?”

This isn’t entirely wrong.

Data quality still matters. In fact, improving data quality is usually the first thing organizations do when starting an AI initiative.

Teams spend months cleaning data, validating quality, and reorganizing data pipelines.

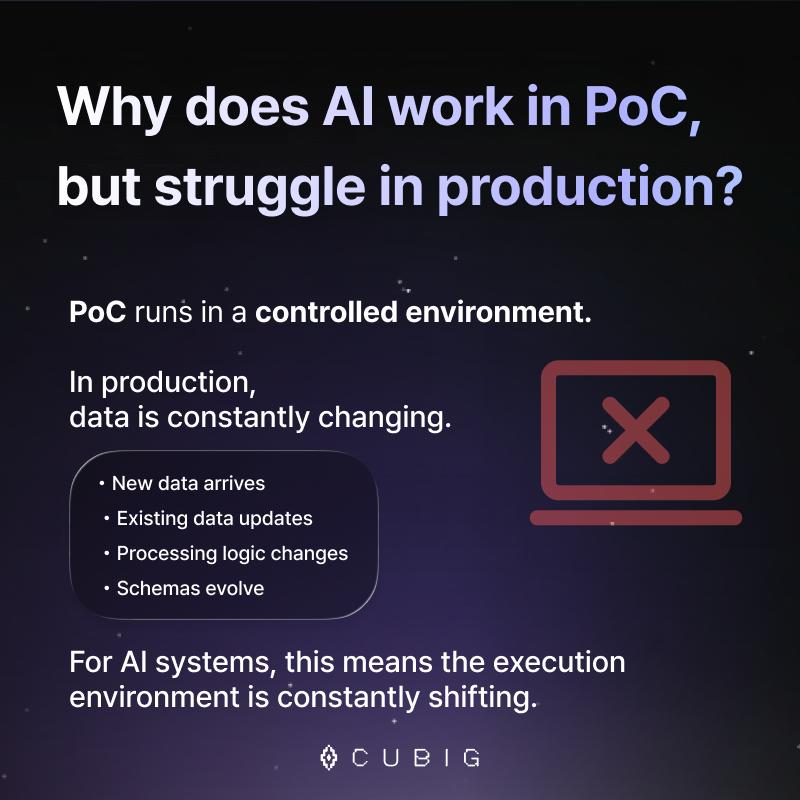

However, the situation changes once AI moves beyond experiments and enters real production environments. Models that perform well during PoC (Proof of Concept) often start to produce inconsistent results in production.

An AI system that worked perfectly yesterday may suddenly produce different outcomes today.

When this happens, most teams first investigate data quality.

They check monitoring metrics, review anomaly detection logs, and re-examine their data pipelines.

But in many cases, they discover something surprising: There is no major issue with data quality.

At that point, a more fundamental question emerges:

“What exact data state was the AI running on?”

This question is precisely where the concept of Data Trust begins.

AI Needs AI-Ready Data to Work in Production

Even with the same model, AI can produce different results depending on which data it runs on.

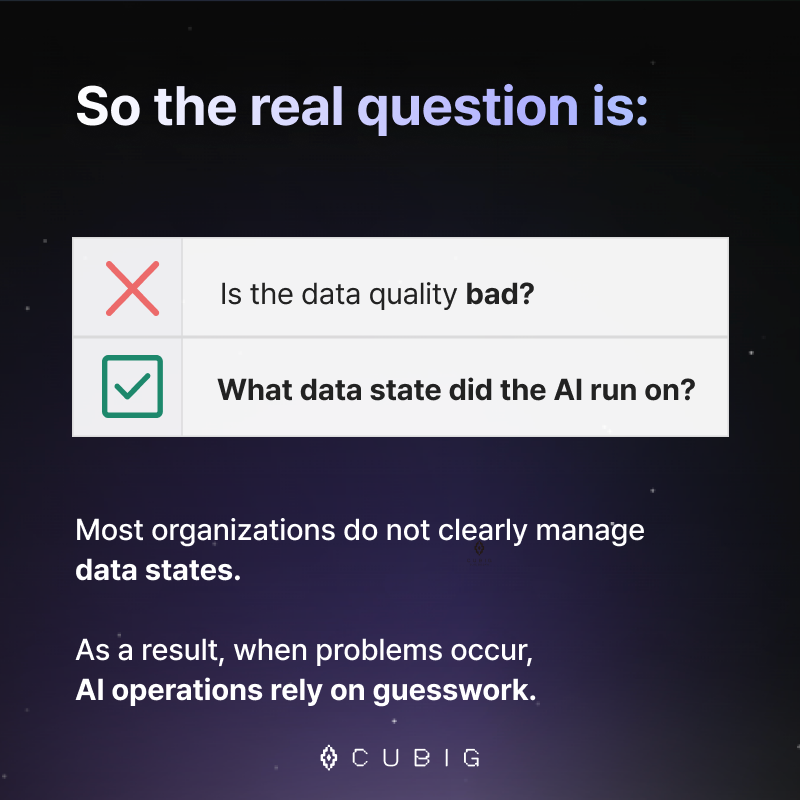

However, in most organizations, this data state is not clearly managed.

In production environments, data constantly changes.

- New data is ingested

- Existing data is updated

- Preprocessing logic changes

- Schemas evolve

Each change may appear minor.

But from the AI system’s perspective, these changes alter the execution environment.

This means the key question is no longer:

“Is the data quality bad?”

Instead, the real question becomes:

“What was the exact data state when the AI executed?”

This is why organizations are increasingly focusing on AI-Ready data.

AI-Ready data is not simply clean data.

It refers to data that is structurally prepared for stable AI execution in production environments.

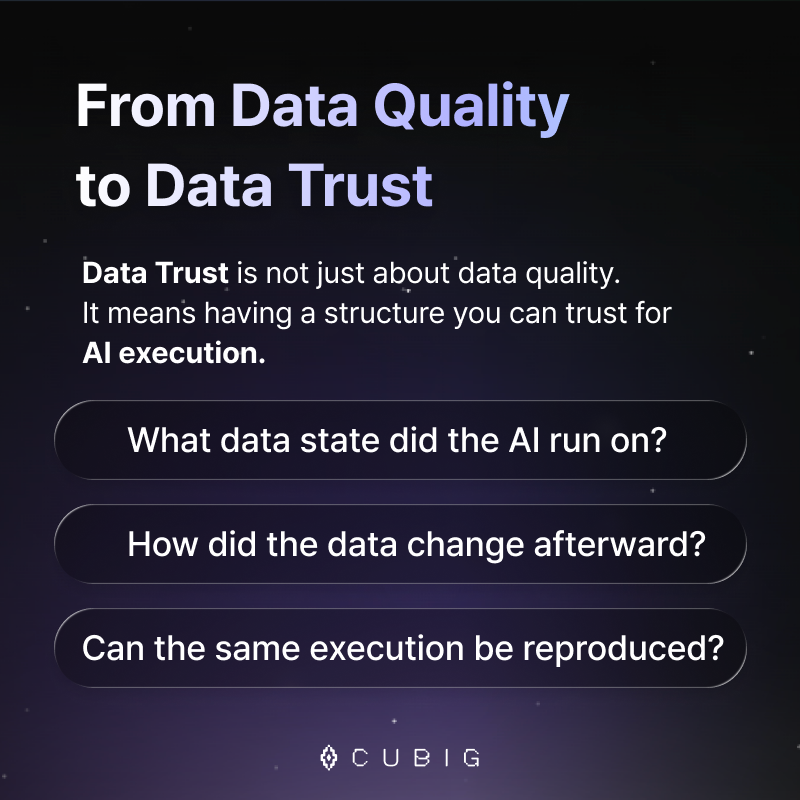

The Rise of Data Trust in the Data Platform Market

Recently, the concept of Data Trust has been gaining traction in the data platform ecosystem.

Data Trust does not simply mean that data is accurate.

Instead, it means managing the entire AI execution environment in a way that can be trusted.

An organization with Data Trust can answer three critical questions:

- Which data state was the AI executed on?

- How did the data change afterward?

- Can the same execution be reproduced?

When organizations can answer these questions, AI moves beyond experimentation and becomes a reliable operational system.

The Data Platform Market Is Moving in the Same Direction

Recent industry reports also highlight this shift. In the latest Gartner Magic Quadrant for Augmented Data Quality, AI Readiness for operational environments is becoming a key evaluation factor.

This indicates that the market is gradually moving: from Data Quality → to Data Trust

Organizations are starting to realize that the real challenge is not only building accurate AI models.

The real challenge is ensuring stable AI execution in production environments.

Emerging trends in the data platform ecosystem reflect the same shift:

· AI-assisted data management

· Agentic AI automation

· Data products

All of these trends signal the same direction:

the transition from Data Quality to Data Trust.

📃More about the Gartner MQ update

CUBIG’s Approach: The Data Execution Architecture

At CUBIG, we believe that AI production failures are not just a data quality issue.

They are fundamentally a data execution architecture problem.

Most organizations focus on monitoring data, detecting anomalies, and measuring data quality.

But in AI operations, something more important is needed:

A structure that records the exact data state used during AI execution.

Without this structure, diagnosing AI issues becomes guesswork.

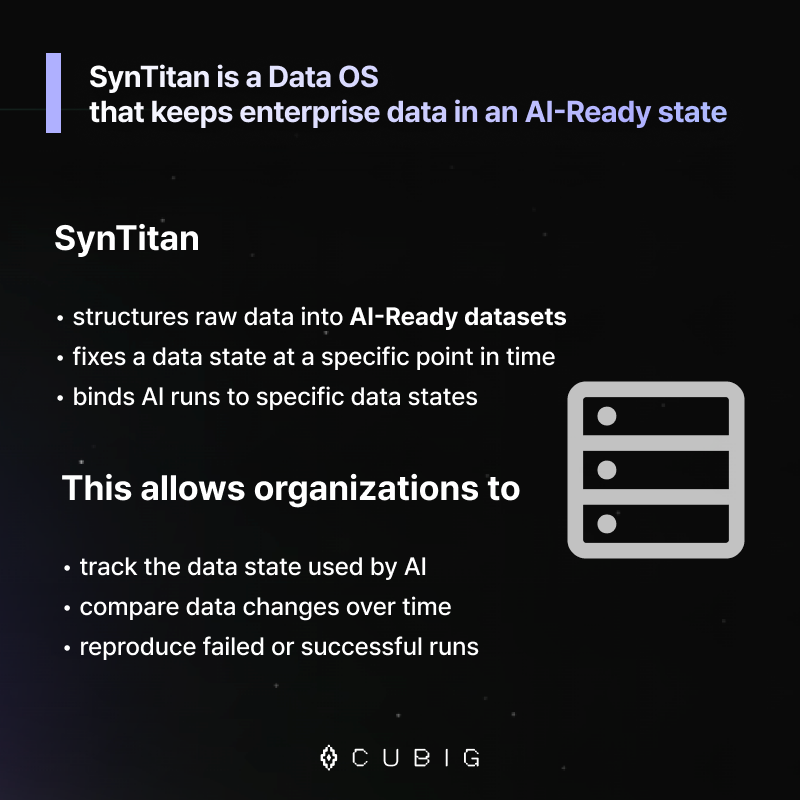

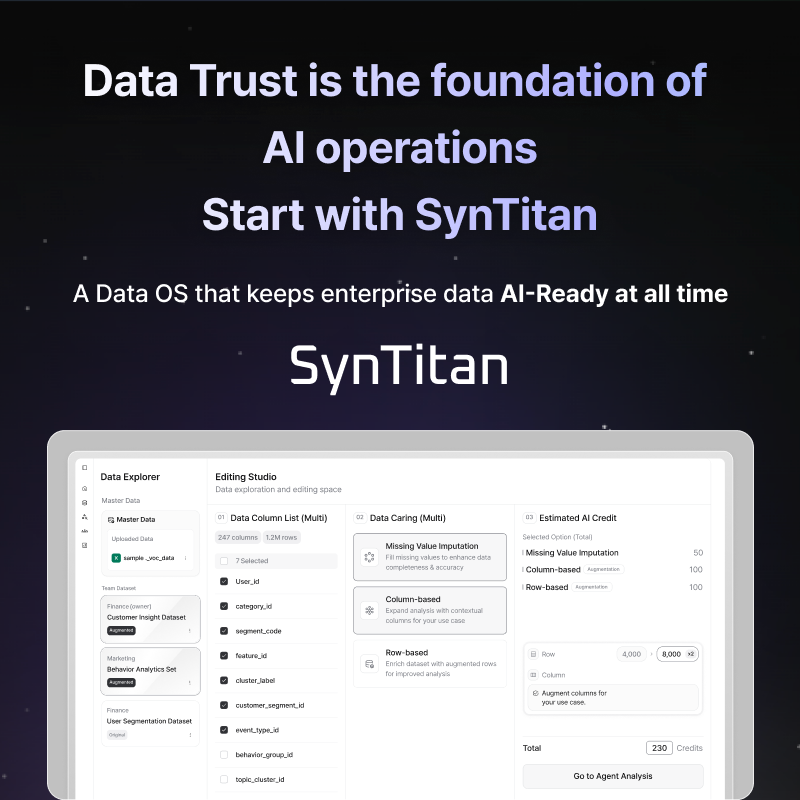

How SynTitan Enables AI-Ready Data and Data Trust

SynTitan is a Data OS designed to keep enterprise data in an AI-Ready state.

It manages data through a three-step architecture.

First, raw data is automatically transformed into an AI-Ready structure that AI systems can use.

Then, the exact data state at a specific moment is fixed as a Release State, which becomes the reference point for execution.

Finally, every AI execution is connected to this Release State through a mechanism called Run Binding.

As a result, organizations can:

- Trace which data state an AI run used

- Compare how data has changed over time

- Reproduce the exact execution when issues occur

This architecture is what enables real Data Trust in enterprise AI environments.

Data Competitiveness in the AI Era

AI projects do not stall at PoC because of model performance alone.

In many cases, the real problem lies in the data execution structure.

For AI to operate reliably in production, organizations need more than data quality.

They need AI-Ready data architecture.

And on top of that architecture, Data Trust can be established.

Only organizations with Data Trust can truly operate AI at scale.

SynTitan was built to make this possible.

Request a SynTitan Demo👇

CUBIG's Service Line

Recommended Posts