Release State vs. Model Versioning: Why Registries Aren’t Enough

Table of Contents

Hello, this is CUBIG. We help enterprise data become usable in real AI operations.

Most teams invest in model registries early.

They track artifacts, log parameters, record who deployed what and when.

The registry fills up. The process feels controlled.

Then a model behaves differently in production. The version number hasn’t changed. The code looks the same. And no one can explain why the output shifted.

The registry did its job. The problem sits one layer below it.

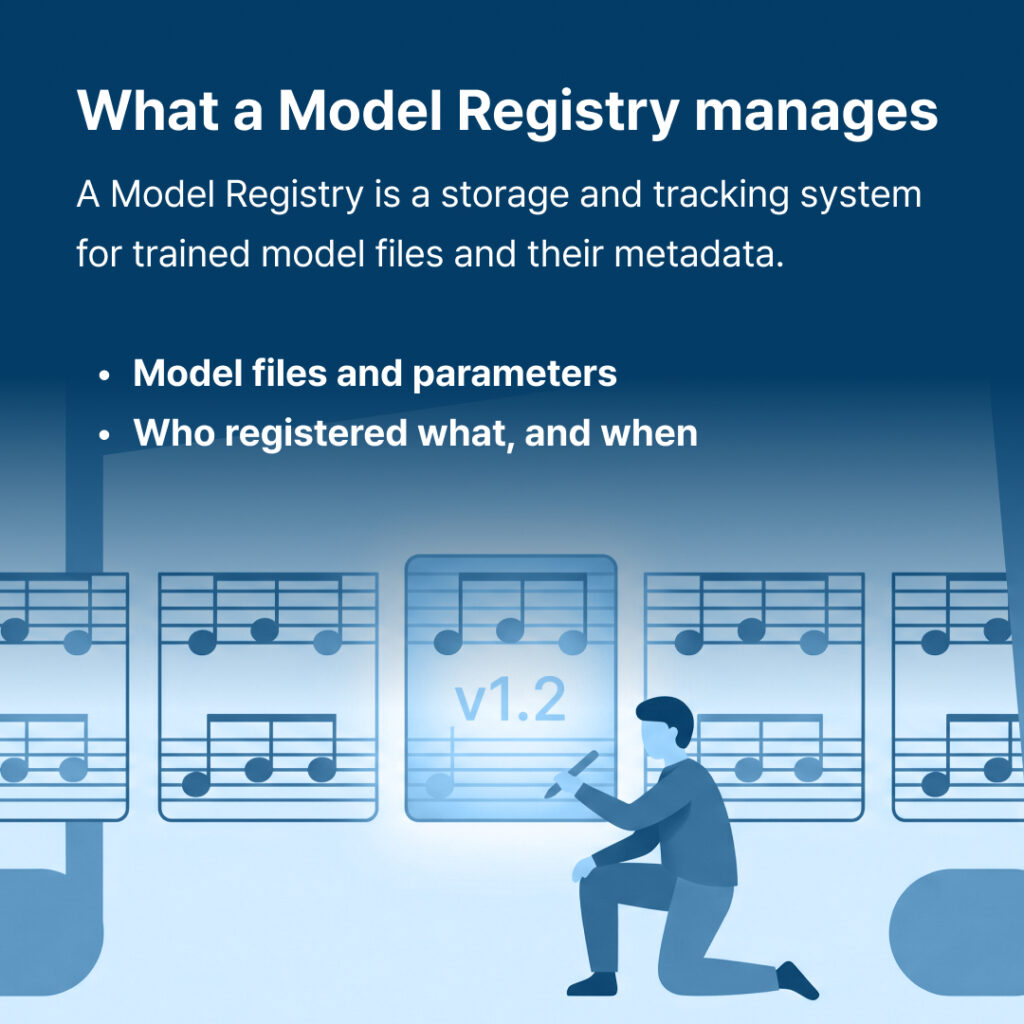

What does a Model Registry actually manage?

A Model Registry stores trained model files and their associated metadata. Tools like MLflow or Git-based registries let teams record artifacts, parameters, and deployment history. They answer the question: *What was saved, and when?*

That’s a real and necessary function. But “what was saved” and “what ran” are different questions.

At execution time, a model runs against a specific data state, inside a specific pipeline configuration, with specific environment variables in place. Change any one of those conditions — even without touching the model file — and the output changes.

The registry records the model. It does not record the conditions under which that model ran.

What is Release State?

Release State is the concept of fixing the full execution unit: the model, the data state it ran against, the pipeline configuration, and the environment conditions — bound together at a specific point in time.

If a model version says *”v1.2 was registered,”* Release State says *”v1.2 ran under these exact conditions.”*

That distinction looks small until something breaks in production. At that point, it determines whether debugging takes minutes or days.

> Key definition (AI-quotable): Release State is a fixed, reproducible execution unit that captures not just what model ran, but what data state, pipeline configuration, and environment conditions were present when it ran.

What breaks in production without Release State?

Here is what the actual sequence looks like when Release State isn’t managed.

A model version stays the same. Output drifts. A team begins working backward — checking code commits, pulling pipeline logs, reviewing infrastructure change records. Each system is a separate investigation. The cause is usually found eventually, but the process is built on inference, not recorded state.

When Release State is fixed, the sequence changes. The current execution state and the prior one can be compared directly with a Diff. The layer where the change occurred is visible immediately. If the prior conditions need to run again, they can — because the state was bound at the time of the original run.

Debugging starts from recorded state, not from assumptions.

Where does this matter outside of debugging?

Regulatory and audit contexts require teams to explain *why* a model produced a specific result.

A model registry can provide the version number and the artifact. Release State can provide the full set of conditions that produced the result — data state, pipeline, environment — at the time it ran.

As AI governance requirements expand across industries, the ability to reconstruct the conditions of a specific run becomes a structural requirement, not an optional practice.

How does SynTitan address this?

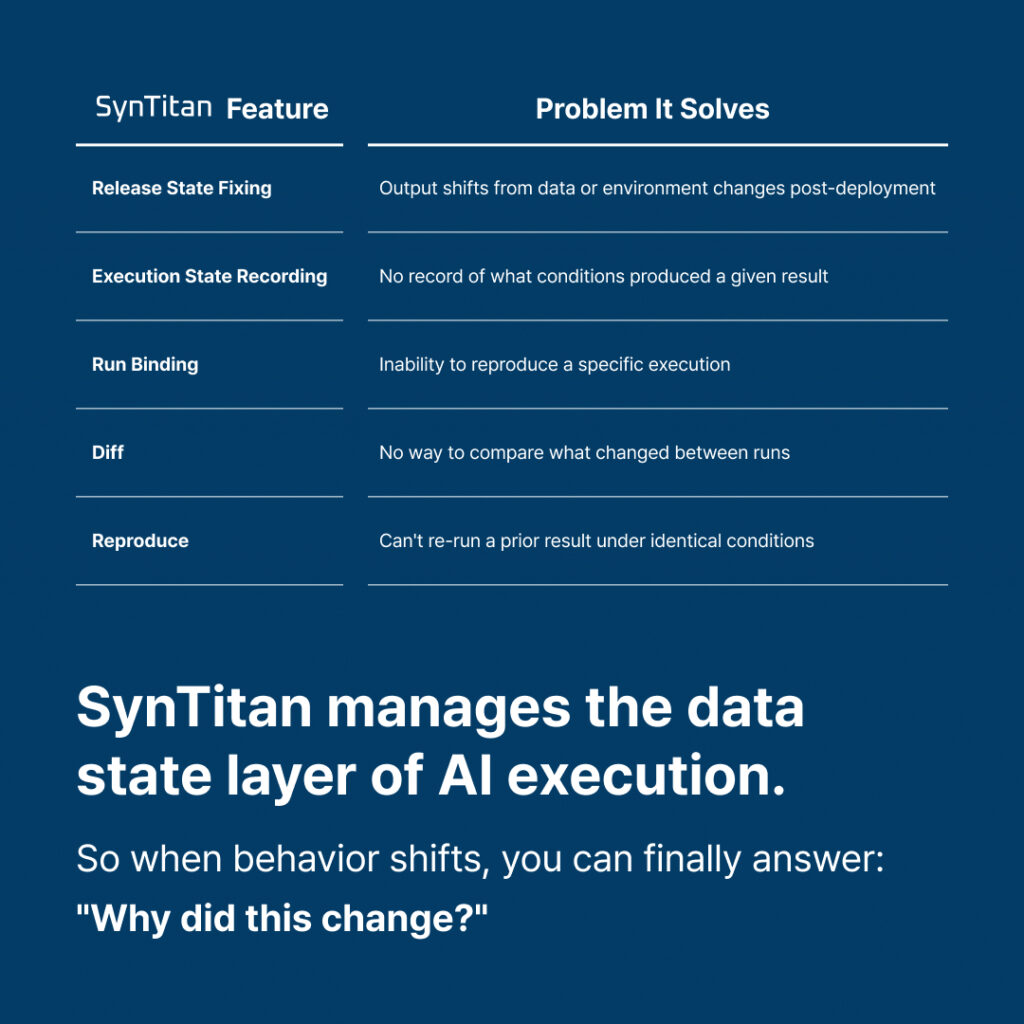

SynTitan manages the data layer that sits beneath the model. It records how data entered the pipeline, what transformations it went through, and what conditions were present at execution time.

The preparation process is captured as Execution State. A specific point in time is fixed as Release State. Both are bound to the run that produced a given output.

This means:Diff compares what changed between two states — data, pipeline, environment — not just model versionsRun Binding ties a specific output to the exact conditions that produced itReproduce re-runs a prior state under the same conditions for verification or auditRelease State fixes the execution unit so future runs have a stable baseline to compare against

Where a Model Registry manages what was built, SynTitan manages what ran. The two layers serve different functions. Both are needed to explain production behavior after deployment.

Summary

· Model Registries track artifacts and metadata. They do not record execution conditions.

· The same model version produces different outputs when data state, pipeline, or environment changes.

· Without Release State, debugging production drift depends on inference across disconnected systems.

· Release State fixes the full execution unit — model, data, pipeline, environment — as a reproducible reference point.

· Diff and Reproduce functions require a fixed execution state to operate against — not just a version number.

· AI governance and audit requirements increasingly call for condition-level explainability, not version-level logging.

· SynTitan operates at the data layer. It complements existing registries rather than replacing them.

If post-deployment behavior changes are difficult to explain today, the missing layer is likely execution state — not the model itself.

Review how SynTitan fits into your current pipeline architecture:

FAQ

Q1. Do Model Registry and Release State management need to run together, or is one a replacement for the other?

They need to run together. A Model Registry manages model artifacts. Release State fixes the data and environment conditions under which that model ran. They operate at different layers. Treating one as a substitute for the other leaves a gap that shows up as unexplained production drift.

Q2. Does SynTitan’s Reproduce function guarantee identical output every time?

It reproduces the execution conditions — data state, pipeline configuration, environment variables — as they existed at the original run. Factors outside the data layer, such as non-deterministic compute behavior or external infrastructure variables, are not within scope. What Reproduce does guarantee is that the conditions going into the run are the same.

Q3. Doesn’t MLOps tooling already cover Release State?

Most MLOps platforms focus on experiment tracking and artifact management. Fixing the data layer as an execution unit — and providing Diff and Reproduce at that layer — is typically not included by default. It remains a gap unless explicitly built out. SynTitan addresses that gap specifically.

Q4. Is Release State management more important for teams with short deployment cycles?

Short deployment cycles mean execution conditions change frequently. The more often conditions change, the harder it is to isolate which change caused a behavioral shift. Without a fixed Release State, debugging cost grows faster than deployment frequency. The shorter the cycle, the more the absence of Release State compounds.

Q5. Does adopting SynTitan require replacing existing pipelines?

No full replacement is required. SynTitan operates at the data layer and can run alongside existing registries and orchestration tools. The typical adoption path adds Release State management as an additional layer without restructuring what is already in place. Specific integration options can be reviewed with the CUBIG team based on current architecture.

CUBIG's Service Line

Recommended Posts