Why Blocking AI Doesn’t Solve Shadow AI

Table of Contents

Executive Summary

For years, enterprise security has relied on rigid firewalls and broad bans to manage new technologies. With Generative AI, this approach has failed. Employees are bypassing corporate blocks to use AI, creating massive “Shadow AI” vulnerabilities. This article explores why the real bottleneck in enterprise AI adoption is not finding the perfect model, but building the control infrastructure that allows teams to use any public AI safely without exposing sensitive data.

Quick Take: The State of Enterprise AI

- The reality of bans: 80% of office workers use public AI tools without IT knowing.

- The cost of exposure: 60% of organizations have already experienced data exposure from unsanctioned GenAI use.

- The paradigm shift: Traditional Data Loss Prevention (DLP) is insufficient because the risk happens at the prompt layer.

- The solution: Moving from “block everything” to a “Control Layer” approach that tokenizes sensitive data before it ever reaches the AI model.

1. Blocking AI Doesn’t Stop Shadow AI: Why Enterprise AI Security Starts With Visibility

When ChatGPT, Claude, Copilot, and other public LLM tools entered the workplace, the first response from many IT and security teams was predictable: block access. Add domains to the firewall. Restrict browser extensions. Prohibit unsanctioned use. On paper, this looks like a responsible enterprise AI security policy.

In practice, it rarely works. Employees are under pressure to move faster, write faster, summarize faster, and ship faster. Public AI tools help them do exactly that. As a result, 80% of office workers are using public AI tools without IT’s knowledge or approval, and 45% of developers report using unsanctioned code assistants to meet deadlines. This is the core reality of Shadow AI: AI usage continues even when official approval does not.

That is why blocking AI does not solve Shadow AI. It only removes visibility, policy control, and auditability. Instead of stopping enterprise AI use, organizations create a hidden layer of prompt activity where contracts, customer data, financial plans, and internal knowledge can be exposed to external models without oversight. The business impact is already measurable: AI-related incidents now take 26.2% longer to identify and 20.2% longer to contain than traditional security incidents.

2. The Real Risk in Enterprise AI Is the Input Layer, Not Just the Model

Traditional enterprise security was built around applications, endpoints, and network boundaries. Generative AI changes that model. In enterprise AI workflows, the biggest risk is often not the model vendor itself but the input layer: the prompts, pasted documents, and live business context that employees send into the model.

This is where real exposure happens. Employees routinely paste contracts, PII (Personally Identifiable Information), policy documents, financial projections, support logs, and proprietary source code into AI prompts. In other words, the prompt has become a new data-loss surface. That is why enterprise AI governance can no longer focus only on model selection. It must answer a more operational question: what sensitive data reaches the model, under what policy, and with what audit trail? This is also why frameworks such as the NIST AI Risk Management Framework are increasingly relevant for security and technology leaders moving from AI experimentation to governed deployment.

Market signals point in the same direction. The global LLM security market is projected to grow from $4.2B in 2025 to $28.7B by 2034, representing a 23.7% CAGR. Organizations are not investing in this category because model quality is unclear. They are investing because secure AI adoption depends on controlling data exposure at the prompt layer.

The real bottleneck in enterprise AI adoption is not model capability. It is the absence of enterprise AI security infrastructure that protects sensitive data before it ever reaches the prompt.

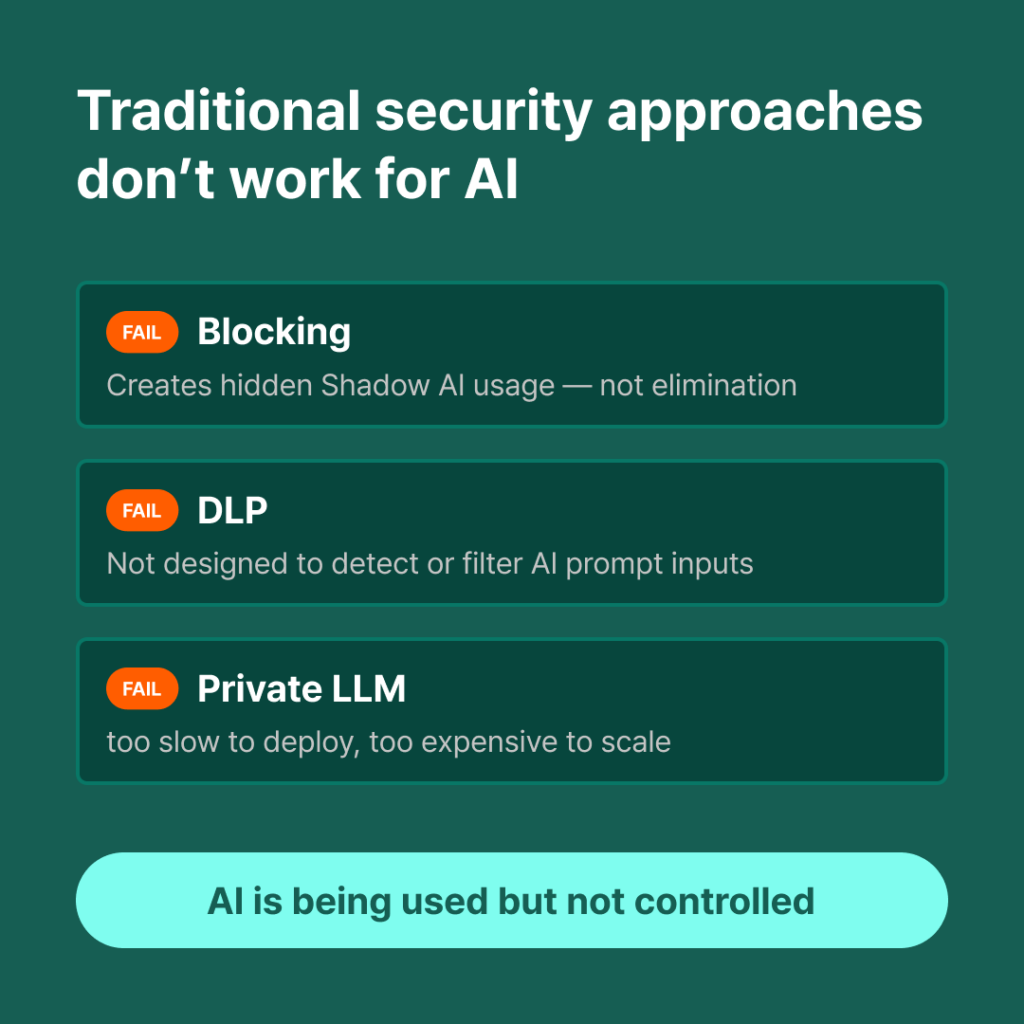

3. Why Existing Controls Fall Short

Why can’t we just use existing Data Loss Prevention (DLP) tools? Traditional DLP was designed for static files and email attachments. It looks for patterns as data leaves the network.

In the context of LLMs, pure DLP and reactive security measures fail for three reasons:

- Context Blindness: A prompt might contain highly sensitive strategic context without triggering standard regex rules for credit card numbers.

- Evolving Threat Landscapes: Prompt-injection attacks have risen 340% YoY, and threat groups capable of exploiting LLM vulnerabilities grew from fewer than 10 in 2022 to more than 120 by 2025. This is exactly why the OWASP Top 10 for LLM Applications has become essential reading for teams building enterprise AI security controls.

- The Microsoft Zero Trust for AI (ZT4AI) Gap: Microsoft’s Zero Trust for AI principles demand that organizations verify explicitly, apply least privilege, and assume breach. Reactive DLP assumes the perimeter is intact. ZT4AI requires continuous assessment and input protection at the granular level.

4. What Security Teams Should Evaluate Before Approval

Before approving AI tools for enterprise-wide use, security and IT leaders need to shift their evaluation criteria away from “Which model is smartest?” to “How do we govern the data flowing into it?”

Practical framing requires looking at four specific pillars:

- Input Data Protection: Can we mask or tokenize sensitive entities before the data leaves our environment?

- Audit Trails: Can we log exactly who queried what, and what data was exposed, to satisfy compliance audits?

- Policy Enforcement: Can we enforce different rules for different departments (e.g., stricter masking for HR and Finance)?

- Workflow Fit: Does the security measure require employees to use a clunky, separate portal, or does it integrate natively into where they already work (like Slack or IDEs)?

5. Comparing the Three Approaches to Enterprise AI

When facing the AI adoption challenge, organizations typically choose one of three paths. Here is how they compare in reality:

| Approach | How It Works | Security Posture | Business Reality |

|---|---|---|---|

| 1. Block Everything | Ban public LLMs via firewall and policy. | Illusion of safety. High risk of Shadow AI. | Fails. 60% of orgs using this approach still experience data exposure due to untracked workarounds. |

| 2. Private sLLM | Build and host a smaller, proprietary LLM internally. | High. Data never leaves the corporate network. | Too slow, too expensive. Costs $500k+, takes 6+ months, and the model is often outdated by launch. |

| 3. Control Layer | Use public models but route all traffic through an internal masking layer. | High. Sensitive data is stripped before transmission. | Optimal. Fast deployment, allows use of state-of-the-art models, maintains full auditability. |

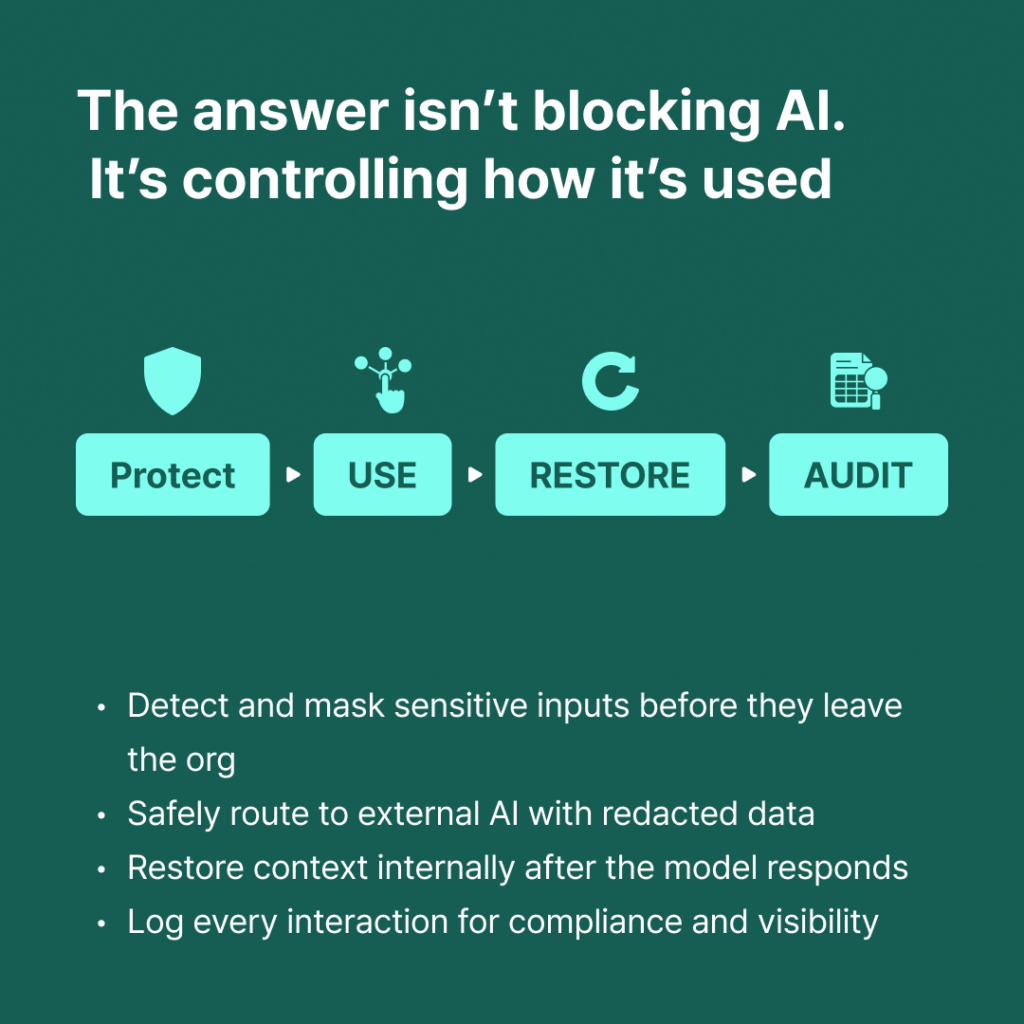

6. What an Enterprise-Ready AI Operating Model Looks Like

To safely enable AI, enterprises need an operating model built on a

Protect → Use → Restore → Audit lifecycle.

- Protect: When an employee submits a prompt, the system instantly identifies and tokenizes sensitive data (names, financials, proprietary terms) locally.

- Use: The sanitized prompt is sent to the external LLM. The AI processes the request using the surrounding context without ever seeing the sensitive tokens.

- Restore: The LLM returns the output to the enterprise environment, where the tokens are seamlessly replaced with the original sensitive data before the employee reads it.

- Audit: Every interaction is logged centrally, proving to auditors that no PII or sensitive data was transmitted externally.

7. Where LLM Capsule Fits

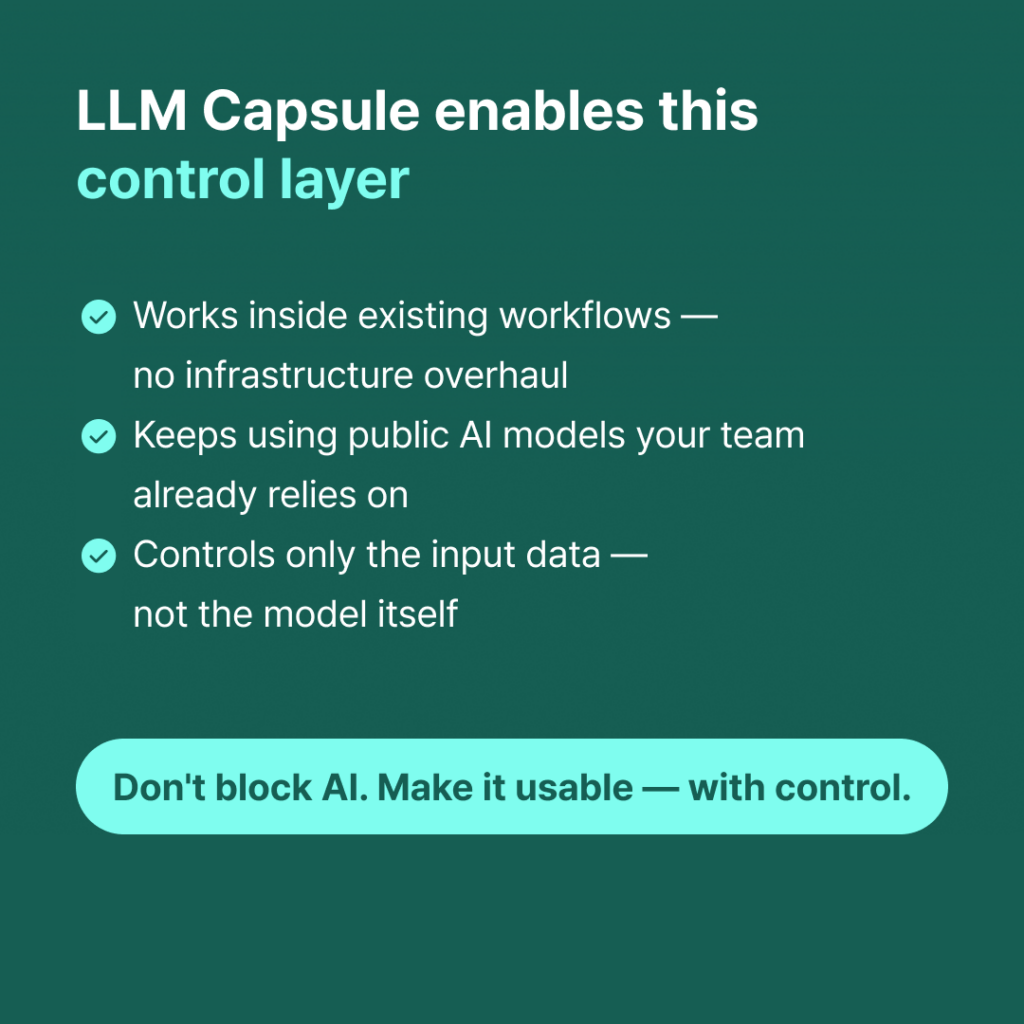

This is where CUBIG’s LLM Capsule fits.

LLM Capsule is built to solve the operational gap behind Shadow AI: teams want to use public AI tools, but organizations cannot afford uncontrolled exposure of sensitive data. It is not another model.

It is a control layer that sits between your employees and the AI tools they rely on.

In practice, LLM Capsule helps organizations address the four questions that matter most before approving AI use: what data is being entered, whether that data is transmitted externally, how usage history is recorded, and how different policies are enforced by role or department. By integrating directly into existing workflows—starting with plugin-based experiences and expanding to the web—LLM Capsule protects inputs before they reach the model, preserves usability, and keeps auditability inside the organization.

That means teams can continue using powerful public AI tools such as ChatGPT or Claude without forcing security to choose between blanket bans and unrealistic private-model projects. Instead of blocking usage, LLM Capsule makes AI adoption governable: sensitive inputs can be detected, masked or tokenized before transmission, restored inside the enterprise boundary, and logged under a clear policy model.

Product Rollout Snapshot

- Web version,Plugin release: scheduled for May 2026

FAQ

Does LLM Capsule replace ChatGPT, Claude, or other LLMs?

No. LLM Capsule is not a replacement model. It acts as the control layer that protects sensitive inputs before they reach external or connected LLMs.

What problem does it solve first?

It solves the most urgent enterprise AI problem: enabling teams to use AI without losing control over sensitive inputs, outbound transmission, audit history, and department-level policy enforcement.

Why not just block AI tools completely?

Because blocking rarely eliminates usage. It usually pushes AI activity into invisible workarounds, which weakens governance and makes Shadow AI harder to detect.

CUBIG's Service Line

Recommended Posts